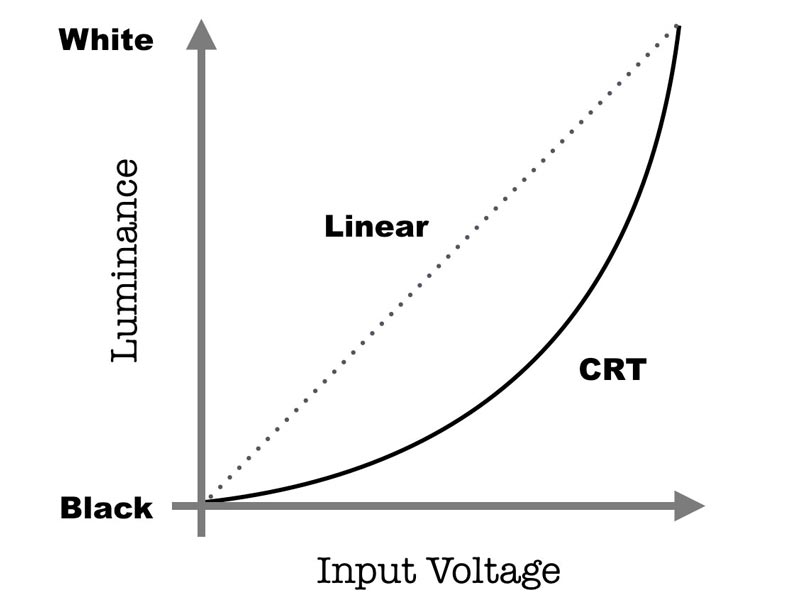

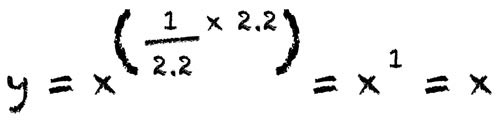

Gamma, or more precisely gamma correction, refers to the operation that encodes the linear values the camera records into a non-linear relationship (or reverses this process when decoding). We gamma correct images because of the historical need to compensate for the non-linear output response of old Cathode Ray Tube (CRT) displays. Their luminance did not rise in a straight line from black to white as the input voltage increased; it curved upward, roughly as a power of the voltage. If the signal transmitted by television broadcasting stations had been linear, the displayed image would have had mid-tones that were far too dark.
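A small sketch of the idea, assuming a CRT that behaves as a pure power law with a display gamma of 2.2 (a commonly quoted typical value, not one taken from this article; real tubes and standards differ in detail). The helpers `crt_display` and `gamma_encode` are hypothetical names used only for illustration.

```python
# Idealised model: a CRT as a pure power law. DISPLAY_GAMMA = 2.2 is an
# assumed typical value, not a measured one.
DISPLAY_GAMMA = 2.2


def crt_display(signal: float) -> float:
    """Luminance (0..1) a power-law CRT produces for a normalised input signal."""
    return signal ** DISPLAY_GAMMA


def gamma_encode(linear: float) -> float:
    """Gamma correction: raise linear values to 1/2.2 (about 0.45) before transmission."""
    return linear ** (1 / DISPLAY_GAMMA)


for scene in (0.25, 0.5, 0.75):
    uncorrected = crt_display(scene)             # linear signal sent straight to the CRT
    corrected = crt_display(gamma_encode(scene))  # encoded signal, "decoded" by the CRT's curve
    print(f"scene={scene:.2f}  uncorrected={uncorrected:.3f}  corrected={corrected:.3f}")
```

In this idealised model a linear mid-grey of 0.5 is displayed at about 0.218, far too dark, while the gamma-encoded signal comes out at 0.500: the encoding exactly cancels the display's curve.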

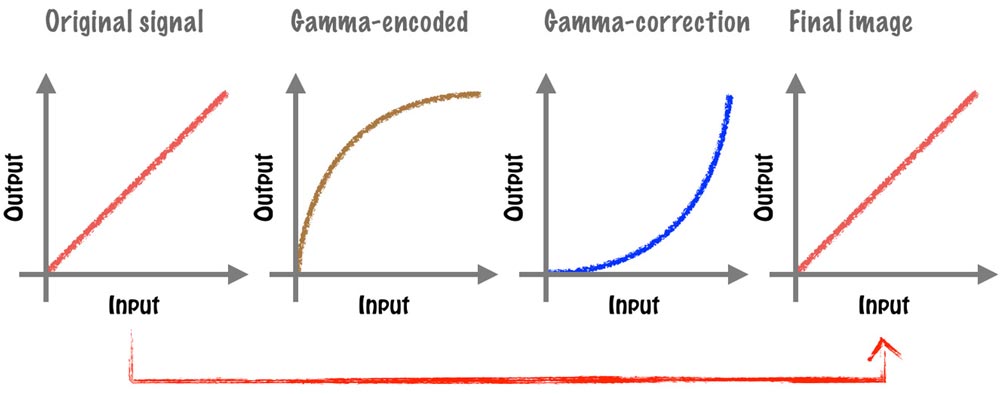

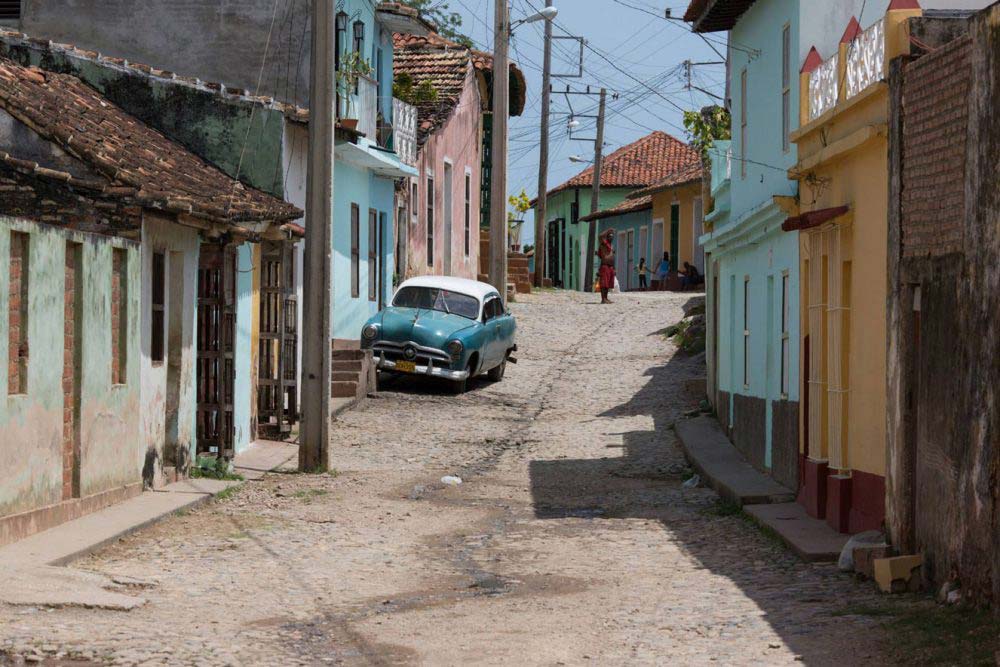

Below are some images showing what you could expect without the gamma encoding and correction process.

Discussions of gamma encoding and histograms on the internet are sometimes confusing. Many articles link gamma encoding to the non-linear response of human vision to luminance, implying (or simply stating) that gamma encoding exists to reconcile this with the linear response of digital camera sensors. This is not true. We never see the gamma-encoded image: it is decoded again for display, so the final image on our screens (or in print) is just what our camera recorded.

The confusion likely arises because gamma encoding (a power function of approximately $x^{0.45}$) and human vision (approximately $x^{0.42}$) follow very similar curves, but this is simply a coincidence.

That said, this similarity does have one significant benefit. Because gamma encoding uses an exponent that closely matches the human visual response to luminance, we can reduce the bit depth of the image, compared with an unencoded linear file, without reducing perceived image quality.

This is because human vision is more sensitive to changes at low light levels than at high ones. At higher luminance we struggle to see a small increase, whereas we would notice the same absolute change in a dark tone. Gamma encoding that mirrors our visual system therefore distributes tonal values more efficiently, assigning more code values to the shadows and fewer to the highlights, which lowers the bit depth at which posterization becomes visible. So even though there are fewer tones at higher luminance levels, we do not perceive any posterization. Fewer tones also means a smaller file (reputedly around 30% smaller than an unencoded, linear file of the same perceived image quality).
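To make the "more efficiently distributed" point concrete, the sketch below counts how many of the 256 codes in an 8-bit file land in different luminance bands, for a linear file versus one encoded with a simple 1/2.2 power law. The pure power law is an assumption (sRGB, for instance, uses a piecewise curve with a short linear segment near black), and the helper names are hypothetical.

```python
# Illustration only: how 8-bit code values spread over linear luminance,
# with and without an assumed pure 1/2.2 power-law encoding.
GAMMA = 2.2   # assumed encoding exponent is 1/GAMMA (about 0.45)
LEVELS = 256  # 8-bit file


def decode_linear(code: int) -> float:
    """Luminance (0..1) represented by a code in an unencoded, linear file."""
    return code / (LEVELS - 1)


def decode_gamma(code: int) -> float:
    """Luminance (0..1) represented by a code in a gamma-encoded file."""
    return (code / (LEVELS - 1)) ** GAMMA


def codes_in_band(decode, low: float, high: float) -> int:
    """Count code values whose decoded luminance falls in [low, high)."""
    return sum(1 for code in range(LEVELS) if low <= decode(code) < high)


# Upper bound of the last band is 1.01 so that full white (1.0) is counted.
BANDS = [("shadows 0.0-0.1", 0.0, 0.1),
         ("mid-tones 0.1-0.5", 0.1, 0.5),
         ("highlights 0.5-1.0", 0.5, 1.01)]

for label, low, high in BANDS:
    print(f"{label:<20} linear={codes_in_band(decode_linear, low, high):3d}  "
          f"gamma={codes_in_band(decode_gamma, low, high):3d}")
```

Under these assumptions the linear file spends 26 codes on the darkest tenth of the luminance range and 128 on the brightest half, while the gamma-encoded file spends 90 and 69 respectively. This counts code values only; it does not by itself reproduce the file-size figure quoted in the paragraph above.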

It is important to bear in mind, though, that this is not the reason we gamma encode images, merely an interesting side benefit.